Environment

A Choking City: What the Ongoing Toxic Week in Delhi Means for its People

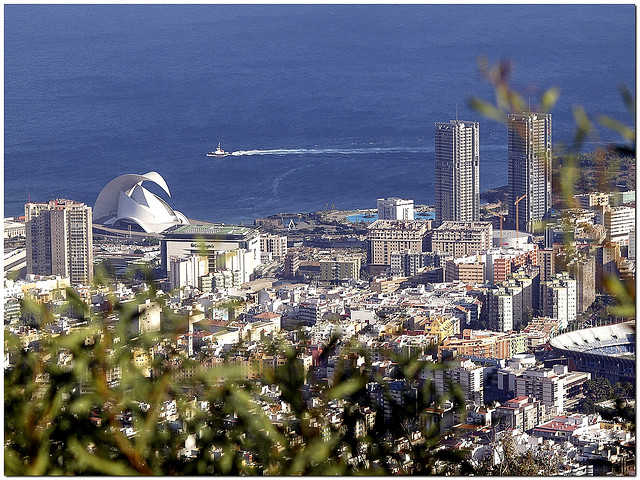

flickr/pamnani

A joke about the morbid state of New Delhi is circulating among Delhiites (people from Delhi): while the lives of citizens were disrupted in November last year by ‘note-bandi’ (the ban on currency notes), November of this year presents an even tougher test with ‘saans-bandi’, a ban on breathing. The receding autumn, or the advent of winter, was once a beloved season for many people in the city, who welcomed the change by revamping their wardrobes with colourful woollens. It is now characterized by bleak skies, an air of gloom and a little bit of grey in everything you see outside your house.

For the past three days, I have been acutely aware of the air I am breathing, have felt unproductive and apprehensive in spells for no good reason, and have felt the need to confine myself to my house for as long as possible. These are some of the less apparent effects of the thick blanket of smog that has engulfed the national capital region. While people wearing different types of masks, on the roads and on Snapchat, serve as a constant visual reminder of how the city is choking, a flurry of articles and news updates has taken over my feed. One of them included a horrifying viral video of vehicles ramming into each other due to poor visibility on the Noida-Agra Expressway as people scrambled to get themselves and their children out of the way, while other articles argued that breathing in Delhi for a day is currently the equivalent of smoking twenty cigarettes.

A sudden state of emergency

Less than two days ago, when the air quality in Delhi visibly worsened, Chief Minister Arvind Kejriwal likened the city to a ‘gas chamber’. The PM 10 and PM 2.5 levels in different parts of the capital have rocketed above the levels that are considered safe, and SAFAR (System of Air Quality and Weather Forecasting And Research) has declared the air quality ‘severe’ for at least the next three days, after which it may improve to ‘very poor’, a level that is not safe either. In some parts of the city, monitors recorded an AQI (air quality index) of 999, the highest possible reading, which suggests that the actual level might be even higher. Visibility during the early hours has also dropped to very low levels. Among the different reasons for the observed level of pollution in Delhi, slow winds at this time of the year have been identified as the prime contributor, along with stubble burning by farmers in the neighbouring states of Punjab and Haryana. Combined with the dust particles present in the air, emissions from the vehicles that clog the roads in the region throughout the day, and those from factories and construction activities, these factors make a recipe for uninhabitable conditions.

Making amends: A scramble for order

On 7th November, the Indian Medical Association declared Delhi to be in a state of public health emergency, urging the Delhi government and other bodies to take adequate steps to minimize the risk to citizens, especially young children and the elderly, who are most likely to suffer from the effects of pollution. As the situation worsened, the government ordered all schools in the capital to remain shut till Sunday and rolled out plans to implement the odd-even scheme for vehicles in the city from next week. Parking fees throughout the city have also been increased fourfold, and metro fares have been substantially reduced for the time being to promote the use of public transport. The National Green Tribunal (NGT) has also banned all construction and industrial activities till November 14 in a bid to give the citizens of Delhi a breath of better quality air. Mr. Kejriwal has also approached his counterparts in Punjab and Haryana over the issue of stubble burning by farmers, but it remains to be seen how the move plays out in the coming days.

As the government battles the situation, members of the public are taking measures to protect themselves in whatever way they can. A growing number of doctors and specialists have advised people not to go out for morning walks or other outdoor activities, so as not to inhale excessive quantities of toxic pollutants. Some doctors have even advised their patients to leave the city for the time being if possible. Sales of air purifiers for homes and masks for travelling outside have risen sharply, as nearly everyone has become an expert on filters, and N95 and N99 have become trending words everywhere from pharmacies to WhatsApp conversations.

A year ago, while New Delhi wrestled with more or less the same conditions, UNICEF called on the rest of the world to treat the situation as a wake-up call. “It is a wake up call that very clearly tells us: unless decisive actions are taken to reduce air pollution, the events we are witnessing in Delhi over the past week are likely to be increasingly common”, it said in a statement. Even if we are doing better than last year, it is still not enough, and all one needs is less than a minute in the open to be convinced of that. As the world battles the effects of climate change, India’s bid to have a major global footprint in the coming decades is bound to take a serious hit if so many of its cities, and especially its capital, keep becoming unlivable for a stretch of time at the end of every year.

Environment

The Future of Fashion: The Rise of Eco-Conscious Brands in the Luxury Market

The once opulent and exclusive realm of luxury fashion is undergoing a dramatic transformation. Driven by a growing global consciousness about environmental impact, consumers are demanding more sustainable choices, even at the highest price points. This shift in consumer preferences is reshaping the industry, forcing luxury brands to reevaluate their production processes and material sourcing.

As a result, luxury eco-friendly collections are becoming increasingly sought after, and brands that prioritize sustainability are gaining a competitive edge.

Key Trends Shaping the Market

The luxury fashion market is experiencing a significant shift as sustainability becomes a core value for both brands and consumers. One of the most prominent trends is the rise of eco-friendly collections that blend high-end design with ethical practices.

These collections are characterized by the use of sustainable materials, such as organic cotton, recycled fabrics, and innovative alternatives to traditional textiles. Brands are also focusing on reducing their environmental impact by adopting eco-friendly production methods, including water-saving technologies and carbon-neutral manufacturing processes.

Brands like Onibai are at the forefront of this movement, offering exquisite designs that not only cater to the aesthetic tastes of discerning customers, but also align with their values of sustainability. As consumers become more aware of the environmental and social implications of their purchases, they are increasingly seeking out brands that offer a blend of luxury and responsibility.

Consumer Demand Driving the Change

Consumer preferences are increasingly dictating the trajectory of the fashion industry. A growing emphasis on sustainability and ethical practices has empowered consumers to demand more from the brands they support. The resulting surge in demand for luxury eco-friendly products, and for transparency about how they are made, has compelled fashion houses to rethink their strategies and adopt more sustainable business models.

For example, a recent study by McKinsey & Company found that 66% of global consumers are willing to pay more for sustainable products. This trend is particularly strong among millennials and Gen Z consumers, who are more likely to be environmentally conscious.

Leading the Way: Eco-Conscious Luxury Brands

Several luxury brands have emerged as leaders in the sustainable fashion movement, setting a precedent for the industry. Onibai, for instance, has distinguished itself with its commitment to sustainability, offering luxury eco-friendly collections that resonate with environmentally conscious consumers.

By prioritizing the use of organic materials, low-impact dyes, and fair labor practices, Onibai exemplifies how luxury can coexist with ethical responsibility.

The Future of Luxury Fashion

The rise of eco-conscious brands in the luxury market marks a significant turning point for the fashion industry. As more brands embrace sustainability, the definition of luxury is evolving to encompass not only quality and craftsmanship but also ethical responsibility. This shift is not just a passing trend; it represents the future of fashion, where consumers and brands alike recognize the importance of preserving our planet while enjoying the finer things in life.

In this new era of luxury fashion, eco-friendly collections like those offered by Onibai are leading the way, proving that sustainability is not a compromise but a new standard of excellence. As the demand for sustainable fashion continues to grow, the future of luxury will undoubtedly be defined by its commitment to eco-consciousness, ensuring that elegance and ethics go hand in hand.

Environment

Redefining marine recycling through painting and sculpture

Frutos María Martínez, a self-taught visual artist, has been rescuing waste from the ocean’s waters for decades, turning it into beautiful and elegant artwork. Finishing his first painting and sculpture pieces at just fourteen, Frutos has spent his entire life guided by his passion for art. Becoming a professional artist in the mid-1980s after working at car dealerships, Frutos used his skills and expertise with metal to create sculptures and paintings, inspired by the materials he found along the Mediterranean Coast.

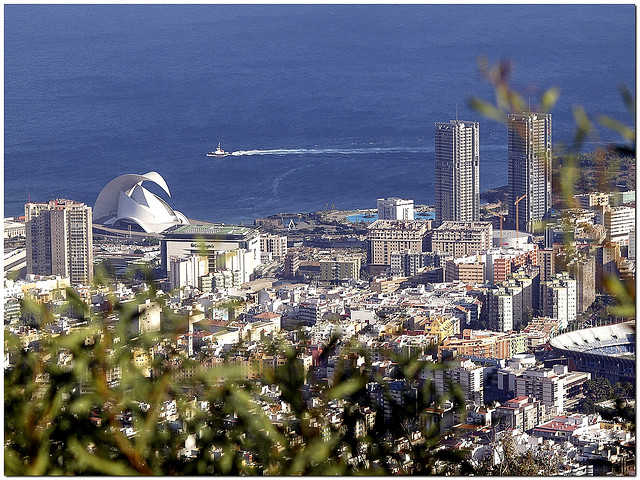

In his pieces, Frutos combines numerous media to create sculptures, paintings, and collages. As part of his process, María spends time exploring the beaches and waters of Alicante, a Mediterranean city on the southeastern coast of Spain and his home since 1985.

Here, he has found all sorts of materials that have gone on to become pieces in his collections. Steel, iron, wood, nets, and textiles, among other objects, that Frutos salvaged from the ocean can all be found in his art.

By reusing and recycling these found objects, the artist is able to give new life to abandoned and forgotten waste. María recognizes the environmental issues we are facing at a global level, and his art seeks to raise awareness of these challenges. As his materials are pulled straight from the Mediterranean Sea, he is especially invested in taking care of marine life and our oceans. With each item the artist salvages from the ocean, one less piece of waste is polluting the waters.

Once the flotsam is collected, Frutos returns to his workshop where he creates works of art in different forms. While other artists may send their work to be fabricated by others, María could not imagine his pieces being created in a place other than his studio. Here, he uses his innate skills with metal and machinery to forge and construct works of beauty. His sculptures follow hard lines, both straight and curved, and his paintings exude color. When making his collages, he takes his found mixed media and creates new stories. He adds paint to wood that was floating in the sea or combines various materials together, reimagining a life for objects that were once trash.

With a true passion for his work, he creates through intuition. He explains that there is a moment when his art becomes completely emotional and personal: “each piece is imbued with my experiences and emotion, all lumped together, all conveyed by means of materials, technique, design and imagination.” The works his hands awaken and renew are the convergence of his mastery and his spirit, bringing new life to discarded objects.

These fascinating pieces have been shown in numerous exhibitions, most recently at the Museum of the University of Alicante. There, the artist presented Acero y pecios del mar (Steel and Sea Wrecks), in which two collections were displayed jointly: one of sculptures focused on steel as a material, and the other, called Nueva Vida (New Life), made up of pieces created by recycling materials salvaged from sea wreckage. With this collection, he introduced a new layer into his work, adding an element of chaos and destruction to the backstories of his materials.

An earlier exhibition, Janus, displayed over 40 of Frutos’ sculptures on the University of Alicante’s campus. This exhibit, named for the Roman god of doors, gates and transitions, was influenced by the duality of life, of beginnings and ends, and of old and new. While his finished pieces reflect this duality, the materials used to create them manifest the theme as well: having met a first death as waste, they are revived into new life, a tangible and concrete example of the polarity that inspired him.

As with his sculpture-centered exhibitions, the shows highlighting his paintings are full of found materials. The pieces that Frutos creates hang with the weight of rescued and recycled materials, such as rusty iron, steel, and wood. These media have been taken from the ocean and worked into his art, joined with resin, sand, and paint in bold and striking colors.

Frutos María’s ability not only to find new meaning in recycled and salvaged objects, but also to help clean up the oceans and make the environment less polluted, comes through in his moving pieces of artwork, and because of this he has made a name for himself in the art world.

Environment

Wind energy, the best way to invest in renewable energies

Over the last few years, wind energy has become the type of energy everyone cannot stop talking about. It brings lots of benefits to the table when compared to more traditional sources of energy, like nuclear power or coal. Wind energy is cheap to produce, the most efficient renewable energy, and, most importantly, an ecologically sensitive alternative.

Why should you invest in wind energy?

Wind energy is the energy of tomorrow. It made its big appearance during the 20th century, when wind turbines were used to bring energy to places located far from the electricity grid, such as isolated farms, houses or factories.

During the 21st century, wind energy’s popularity has kept on rising. Wind energy is as cheap to produce as traditional sources of energy, like nuclear power or coal burning, while falling into the category of renewable energies. This has made wind energy a top contender in the energy industry.

The wind industry’s future looks brighter than ever. The current generation is well aware of pollution and the effect it has on climate change. This has led governments all around the world to introduce new legislation and campaigns promoting renewable energy and, since wind energy is the most efficient type of renewable energy, it is expected to become the main source of energy by 2030. Now is the best moment to jump on the wind energy trend!

Making sure you set up an efficient wind farm

As previously stated, wind energy is definitely an option you should consider if you are looking to power any of your properties or businesses. However, setting up a wind farm isn’t a small investment, so before starting the process you need to gather all the information you can about its viability. That is why we strongly recommend consulting professional companies like Vortex FDC.

Vortex FDC is a company that has made a name for itself in the wind industry. Vortex provides its customers with all the wind resource information they could need.

For example, an important factor to consider before setting up a wind farm is the terrain. Not all terrains are suitable for a wind farm, so the site needs to be assessed before installation; building on unsuitable terrain could result in a waste of assets. Vortex FDC helps its customers evaluate where the wind farm is going to be placed and provides information about the wind, so they can choose the type of turbine that would yield the best results in that area. Factors like the type of wind (extreme winds, turbulence, etc.) or the temperature need to be taken into account when deciding which type of turbine is most suitable. For example, if you plan to build a wind farm in an area that gets freezing and snowy in winter, you will need a cold-resistant turbine system.

Vortex FDC runs a numerical weather model that accounts for all the variables that could affect the production levels of your wind farm, regardless of whether it is situated offshore or onshore. Thanks to their always up-to-date technology, you will be able to avoid unpleasant surprises regarding the energy production of your wind farm.

Overall, opting to use wind energy is an amazing idea that will benefit not only our budget, but also the planet. To do so, however, we need to make sure that wind farms are viable, and for that we need to rely on professionals like Vortex FDC.
